Data movement is the beating heart of modern data infrastructure. It facilitates seamless information flow across systems to power customer interactions, AI, analytics, and so much more. Powered by Flink and Debezium, Decodable handles both ETL and ELT workflows, eliminating the need for teams to compromise between data processing and movement. Beyond providing a core data infrastructure platform, Decodable addresses the challenge of data movement and stream processing pragmatically, reimagining it in a way that is unified, real-time, and cost efficient. By simplifying the most formidable data infrastructure challenge, Decodable enables teams to focus on their core strengths: innovation and delivering value.

One of the most common data movement use cases is sending data from event streaming systems to durable storage for analysis. In this guide, we’ll look at moving data from Apache Kafka to Amazon S3. Kafka is one of the most popular open-source distributed event streaming platforms, designed to handle vast amounts of real-time data and facilitate its transportation and storage. Kafka supports a wide variety of use cases including high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. Similarly, S3 is among the most popular object storage services. Object storage systems are optimized for retaining massive amounts of unstructured data, and are typically used as the foundation for data lakes and to support business applications, photo and video archiving, websites, and more.

Apache Kafka Overview

Event streaming is the practice of capturing data in real-time from event sources, storing these event streams durably for later retrieval, and routing the event streams to different destinations as needed. Kafka is an open-source distributed event streaming platform that is optimized for ingesting and processing streaming data in real-time. The primary functions of Kafka are:

- Publish and subscribe to streams of records

- Durably store streams of records in the order in which they were written to each partition

Kafka is run as a cluster of one or more servers that can span multiple data centers or cloud regions. Some of these servers form the storage layer, called the brokers. Other servers run Kafka Connect to continuously import and export data as event streams to integrate Kafka with your existing systems such as relational databases as well as other Kafka clusters.

Kafka clients allow you to write distributed applications and microservices that read, write, and process streams of events in parallel, at scale, and in a fault-tolerant manner even in the case of network problems or machine failures.
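To make the publish/subscribe and ordered-storage ideas above concrete, here is a minimal sketch of an append-only log with per-group read offsets. It is a toy, in-memory model written for this guide, not the Kafka client API: the `ToyTopic` class, its method names, and the group names are all hypothetical, and it models a single partition only.

```python
from collections import defaultdict


class ToyTopic:
    """In-memory stand-in for one topic partition (not the Kafka API)."""

    def __init__(self):
        self._log = []                    # append-only list of records
        self._offsets = defaultdict(int)  # next offset to read, per consumer group

    def publish(self, record):
        """Append a record and return the offset it was stored at."""
        self._log.append(record)
        return len(self._log) - 1

    def poll(self, group, max_records=10):
        """Return the next unread records for a group, in append order."""
        start = self._offsets[group]
        batch = self._log[start:start + max_records]
        self._offsets[group] = start + len(batch)
        return batch


topic = ToyTopic()
for event in ("order-created", "order-paid", "order-shipped"):
    topic.publish(event)

# Two independent groups each read the full stream in the original order,
# at their own pace.
analytics = topic.poll("analytics")
archiver = topic.poll("archiver", max_records=2)
archiver_rest = topic.poll("archiver")
```

Because each group tracks its own offset, one consumer falling behind does not affect another. That property is what lets a single stream feed both real-time applications and a slower archival destination such as S3.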

Amazon S3 Overview

Amazon S3 (Simple Storage Service) is a fully managed object storage service from Amazon Web Services. Data is stored as objects inside buckets, and each object is addressed by a key within its bucket. Because storage is decoupled from any particular compute engine, S3 is commonly used as the storage layer for data lakes, backups and archives, and analytics workloads, where query engines and other tools read the objects directly.

S3 is delivered as a managed cloud service rather than as a packaged software offering that a user installs. AWS manages capacity, scaling, and software updates for the service, so there are no servers to provision or maintain. Access to buckets and objects is controlled through AWS Identity and Access Management (IAM) policies, which is why the prerequisites below involve setting up an IAM role.

Prerequisites

Using Amazon S3 with Decodable

Before you send data from Decodable into S3, you must have an Identity and Access Management (IAM) role with the following policies (see the Setting up an IAM User section for more information):

- A Trust Policy that allows access from Decodable’s AWS account. The <span class="inline-code">ExternalId</span> must match your Decodable account name.

- A Permissions Policy with read and write permissions for the destination bucket.
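As a rough illustration of the two policies above, the sketch below builds them as Python dictionaries and prints the JSON you would paste into the IAM console. The account ID, account name, and bucket name are placeholders, and the specific S3 actions listed are an assumption about what "read and write" needs; check the Decodable documentation for the exact account ID and action list the connector requires.

```python
import json

# Hypothetical placeholders: replace with real values for your accounts.
DECODABLE_AWS_ACCOUNT_ID = "111122223333"   # placeholder, NOT Decodable's real account ID
DECODABLE_ACCOUNT_NAME = "my-decodable-account"
BUCKET = "my-destination-bucket"

# Trust policy: lets the external AWS account assume this role, but only
# when it presents the expected ExternalId.
trust_policy = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": {"AWS": f"arn:aws:iam::{DECODABLE_AWS_ACCOUNT_ID}:root"},
        "Action": "sts:AssumeRole",
        "Condition": {"StringEquals": {"sts:ExternalId": DECODABLE_ACCOUNT_NAME}},
    }],
}

# Permissions policy: read/write on objects plus listing the bucket.
# The action list is an assumption; the connector may need more or fewer.
permissions_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject", "s3:PutObject"],
            "Resource": f"arn:aws:s3:::{BUCKET}/*",
        },
        {
            "Effect": "Allow",
            "Action": "s3:ListBucket",
            "Resource": f"arn:aws:s3:::{BUCKET}",
        },
    ],
}

print(json.dumps(trust_policy, indent=2))
print(json.dumps(permissions_policy, indent=2))
```

Note that object-level actions apply to the `bucket/*` resource while `s3:ListBucket` applies to the bucket itself. Mixing the two up is a common cause of access-denied errors.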

Create Connectors

Follow the steps in the sections below to get data from Kafka into Decodable, optionally transform it, and then send it from Decodable to Amazon S3. These steps assume that you are using the Decodable web interface. If you’d rather use the Decodable CLI to create the connections, refer to the Decodable documentation for Kafka and Amazon S3 for the required property names. Note that the Kafka connector can be used as either a source or a destination; we’ll be using it as a source for this use case.

Create a Kafka Source Connector

- From the Connections page, select Kafka, leave the Connection Type set to its default of source, and complete the required fields.

- Select the stream that you’d like to connect to this connector. Then, select Next.

- Define the connection’s schema. Select New Schema to manually enter the fields and field types present or Import Schema if you want to paste the schema in Avro or JSON format. The stream’s schema must match the schema of the data that you plan on sending through this connection.

- Select Next when you are finished defining the connection’s schema.

- Give the newly created connection a Name and Description and select Save.

- Start your connection to begin processing data from Kafka.
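For the schema step above, it can help to see what an Avro schema looks like before pasting one in. The sketch below builds a small Avro record schema as a Python dictionary and prints it as JSON. The `Order` record and its fields are hypothetical and stand in for whatever your Kafka topic actually carries; the `missing_fields` helper is likewise just an illustrative check, not part of any Decodable tooling.

```python
import json

# Hypothetical record layout for illustration; use your topic's real fields.
order_schema = {
    "type": "record",
    "name": "Order",
    "fields": [
        {"name": "order_id", "type": "long"},
        {"name": "customer_email", "type": "string"},
        {"name": "amount", "type": "double"},
        # A union with "null" marks the field as optional.
        {"name": "coupon_code", "type": ["null", "string"], "default": None},
    ],
}

sample_message = {
    "order_id": 1001,
    "customer_email": "a@example.com",
    "amount": 19.99,
    "coupon_code": None,
}


def missing_fields(schema, message):
    """Return schema field names that do not appear in the message."""
    return [f["name"] for f in schema["fields"] if f["name"] not in message]


# Text suitable for pasting into an Avro schema import dialog.
print(json.dumps(order_schema, indent=2))
```

Running a quick check like `missing_fields(order_schema, sample_message)` against a few real messages is a cheap way to catch a schema mismatch before starting the connection.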

Create an Amazon S3 Sink Connector

- From the Connections page, select the Amazon S3 connector and complete the required fields.

- Select which stream contains the records that you’d like to send to Amazon S3. Then, select Next.

- Give the newly created connection a Name and Description and select Save.

- If you are replacing an existing Amazon S3 connection, then restart any pipelines that were processing data for the previous connection.

- Finally, Start your connection to begin ingesting data.

At this point, you have data streaming in real-time from Kafka to S3!

Processing Data in Real-Time with Pipelines

A pipeline is a set of data processing instructions written in SQL or expressed as an Apache Flink job. Pipelines can perform a range of processing including simple filtering and column selection, joins for data enrichment, time-based aggregations, and even pattern detection. When you create a pipeline, you define what data to process, how to process it, and where to send the results, using either a SQL query or a JVM-based programming language of your choosing, such as Java or Scala. Any data transformation that the Decodable platform performs happens in a pipeline. To configure Decodable to transform streaming data, you can insert a pipeline between streams. As we saw when creating an Amazon S3 connector above, pipelines aren’t required simply to move or replicate data in real-time.

Create a Pipeline between the Kafka and Amazon S3 Streams

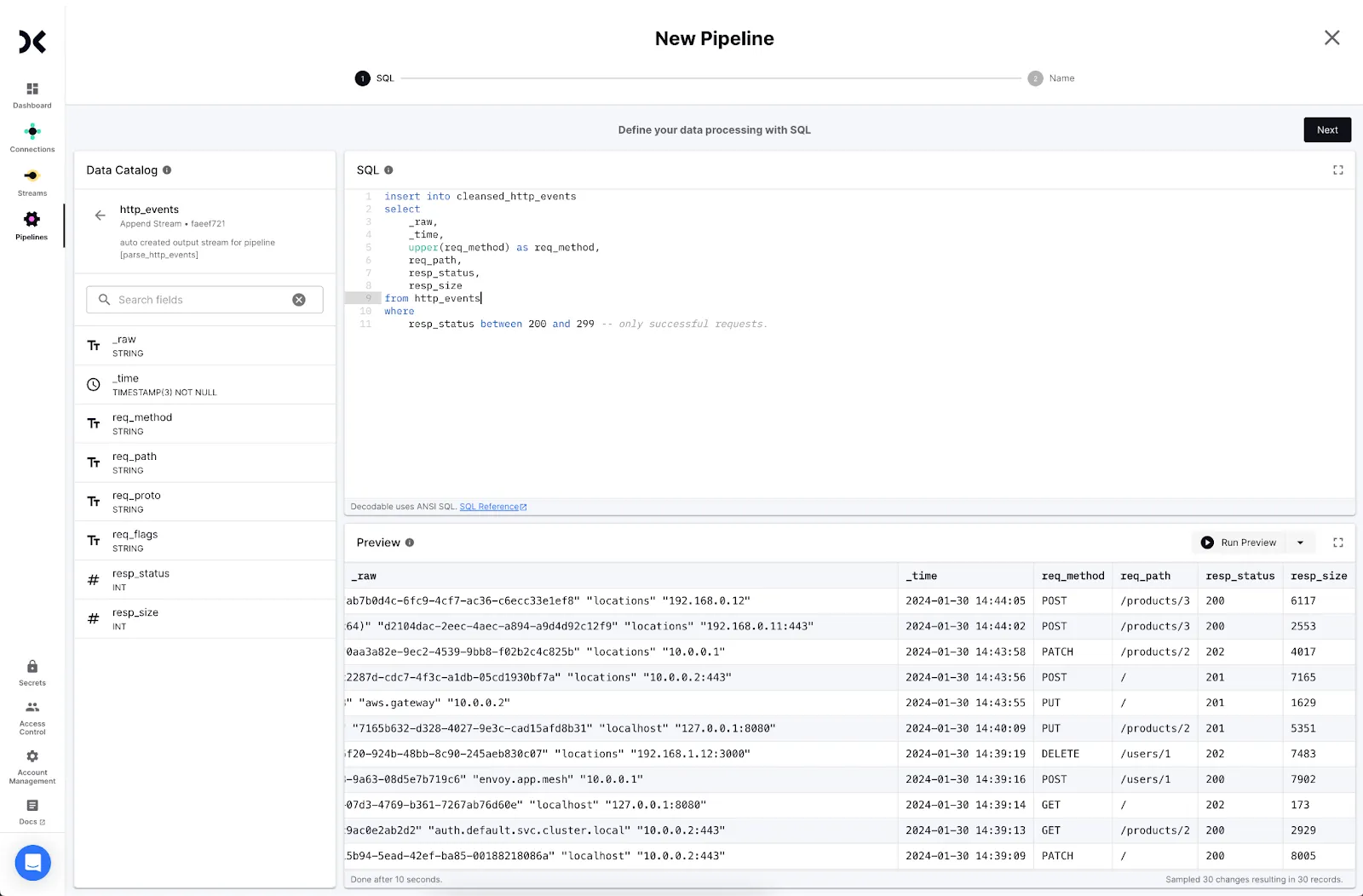

As an example, you can use a SQL query to cleanse the Kafka data to meet specific compliance requirements or other business needs when it lands in S3. Perform the following steps:

- Create a new Pipeline.

- Select the stream from Kafka as the input stream and click Next.

- Write a SQL statement to transform the data. Use the form: <span class="inline-code">insert into &lt;output&gt; select … from &lt;input&gt;</span>. Click Next.

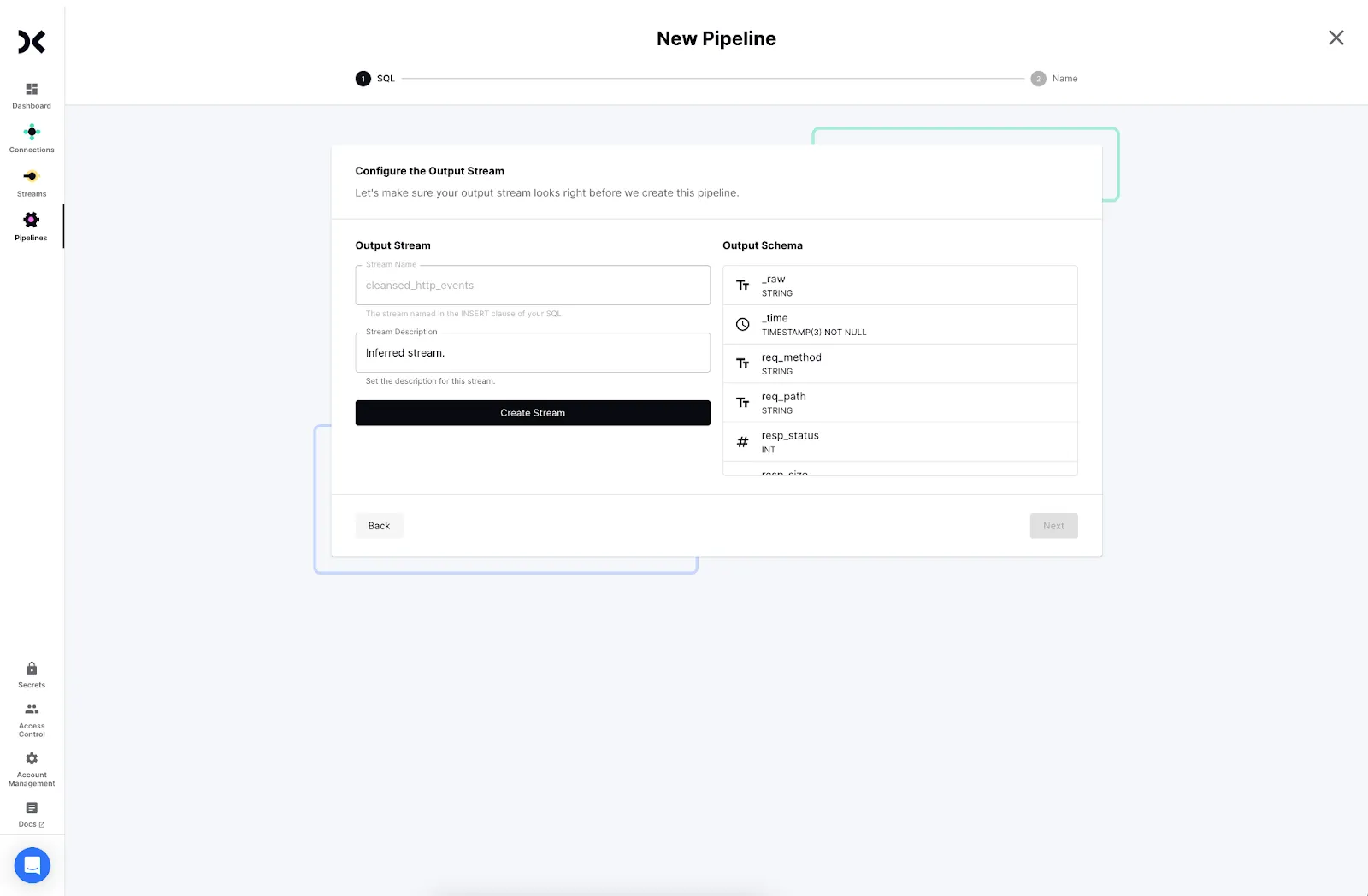

- Decodable will create a new stream for the cleansed data. Click <strong>Create</strong> and <strong>Next</strong> to proceed.

- Provide a name and description for your pipeline and click <strong>Next</strong>.

- Start the pipeline to begin processing data.

The new output stream from the pipeline can be written to Amazon S3 instead of the original stream from Kafka. You’re now streaming transformed data into Amazon S3 from Kafka in real-time.
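To show the shape of a cleansing statement in the `insert into … select … from …` form described above, here is a small sketch that runs one against an in-memory SQLite database. SQLite is only a stand-in so the example can run anywhere: its dialect differs from the SQL that Decodable pipelines use, and in Decodable the input and output would be streams rather than tables. The table names, column names, and sample rows are all hypothetical.

```python
import sqlite3

# SQLite stands in for the streaming engine; names and rows are hypothetical.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE kafka_orders (
        order_id INTEGER, customer_email TEXT, card_number TEXT, amount REAL
    );
    CREATE TABLE orders_cleansed (
        order_id INTEGER, customer_email TEXT, amount REAL
    );
    INSERT INTO kafka_orders VALUES
        (1, '  Alice@Example.com ', '4111111111111111', 19.99),
        (NULL, 'broken@example.com', '4000000000000002', 5.00),
        (2, 'BOB@example.com', '4242424242424242', 42.50);
""")

# Cleansing rules in this sketch:
#   - drop the sensitive card_number column by not selecting it
#   - normalize email addresses
#   - filter out records without an order_id
cleanse_sql = """
    INSERT INTO orders_cleansed
    SELECT order_id, LOWER(TRIM(customer_email)), amount
    FROM kafka_orders
    WHERE order_id IS NOT NULL
"""
conn.execute(cleanse_sql)

rows = conn.execute(
    "SELECT order_id, customer_email, amount FROM orders_cleansed ORDER BY order_id"
).fetchall()
print(rows)
```

The sensitive column never reaches the output, which is the point of cleansing in flight: data that should not land in S3 is removed before it is written rather than scrubbed afterwards.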

Conclusion

Replicating data from event streaming systems like Kafka to S3 in real-time helps you manage storage costs, reduce latency, and keep the additional copies of your data that regulatory and compliance requirements call for. It’s equally simple to cleanse data in flight so it’s useful as soon as it lands. Beyond making data available sooner, this frees up data warehouse resources to focus on critical analytics, ML, and AI use cases.
